
Astrophysics & Cosmology

CCSE Members of the Astrophysics & Cosmology Teams

CCSE is involved with two DOE-sponsored projects in computational astrophysics and one in computational cosmology.

The ExaStar project (ECP) is working to deliver an efficient, versatile, and portable code suite for multi-physics astrophysics simulations run on exascale machines. The primary simulation codes used by CCSE for this project are CASTRO and the StarKiller Microphysics routines, described below.

The SciDAC-TEAMS project is a collaboration that aims to study the astrophysical events responsible for producing many of the heaviest chemical elements, using realistic simulations that include the most complete physics available. The primary simulation code used by CCSE for this project is MAESTROeX, described below.

These projects are in collaboration with Michael Zingale at Stony Brook University.

CASTRO: Compressible Astrophysics

Castro is hosted at http://www.github.com/AMReX-Astro/Castro

You will need AMReX to build Castro; you can download AMReX from http://www.github.com/AMReX-Codes/amrex

Much more information about Castro is available at http://AMReX-Astro.github.io/Castro.

Please visit GitHub to download Castro and AMReX to begin.

Science with Castro


Type Ia Supernovae from White Dwarf Mergers: A volume rendering of the density after the merger of a 0.6 and a 0.9 solar mass white dwarf. The calculation was performed on ORNL's Titan supercomputer by Max Katz and Michael Zingale of Stony Brook University.


Flame Evolution in X-ray Bursts from Neutron Stars: A movie of a helium flame spreading over a neutron star surface, from a study of flame dynamics in X-ray bursts. Shown is the out-of-plane velocity in a 2D calculation that uses AMR to capture both the vertical and lateral dynamical length scales at 10 cm resolution on the neutron star surface.


MAESTROeX: Low Mach Number Astrophysics


Many astrophysical phenomena of interest occur in the low Mach number regime, where the characteristic fluid velocity is small compared to the speed of sound. Well-known examples include the convective phase of Type Ia supernovae, classical novae, convection in stars, and Type I X-ray bursts. Such problems require a numerical approach capable of resolving phenomena over time scales much longer than the characteristic time required for an acoustic wave to propagate across the computational domain.
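The time-step argument can be made concrete with CFL arithmetic. This is a minimal sketch that assumes a simple CFL condition and ignores other limits a real code also enforces (for example buoyancy or reaction time scales). A fully compressible explicit scheme must track sound waves, so dt ≤ CFL·Δx/(|u| + c), while a low Mach number method that filters out acoustic waves is limited by the fluid speed alone, dt ≤ CFL·Δx/|u|. The ratio is 1 + 1/M, roughly 100 at M = 0.01. The function names and sample speeds below are hypothetical.

```python
def cfl_timestep(dx, signal_speed, cfl=0.5):
    """Largest stable explicit step: a signal may cross at most `cfl` of a zone."""
    return cfl * dx / signal_speed


def timestep_gain(u, c, dx, cfl=0.5):
    """Ratio of the low Mach (advective) step to the compressible (acoustic) step."""
    dt_acoustic = cfl_timestep(dx, abs(u) + c, cfl)  # must resolve sound waves
    dt_advective = cfl_timestep(dx, abs(u), cfl)     # sound waves filtered out
    return dt_advective / dt_acoustic                # equals 1 + c/|u| = 1 + 1/M


if __name__ == "__main__":
    # Hypothetical convective flow: |u| = 1e6 cm/s, c = 1e8 cm/s, so M = 0.01.
    print(timestep_gain(u=1.0e6, c=1.0e8, dx=1.0e5))  # ratio is about 101
```

The grid spacing cancels in the ratio. The benefit depends only on the Mach number, which is why the low Mach approach pays off most for very subsonic flows.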

MAESTROeX is freely available for download at http://www.github.com/AMReX-Astro/MAESTROeX

You will need AMReX to build MAESTROeX; you can download AMReX from http://www.github.com/AMReX-Codes/amrex

Much more information about MAESTROeX is available at http://AMReX-Astro.github.io/MAESTROeX.

Please visit GitHub to download MAESTROeX and AMReX to begin.

Nyx: an adaptive mesh, N-body compressible cosmological hydrodynamics simulation code


Nyx is hosted at

http://www.github.com/AMReX-Astro/Nyx

with more information about Nyx at

StarKiller Microphysics

StarKiller Microphysics is a set of publicly available microphysics modules designed to enable simulations of stellar explosions. Microphysics is not a stand-alone code; it is intended to be used in conjunction with a simulation code. It was originally designed to support the AMReX codes CASTRO and MAESTROeX. The modules share a consistent interface and are designed to give users of those codes an easy path away from the barebones microphysics modules bundled with the codes themselves. The microphysical components we currently provide are the equation of state (EOS) and the nuclear burning network.
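To illustrate what a shared EOS interface means in practice, here is a minimal Python sketch. Any calling code fills in a common state object and names the input variables it is supplying. The names `EosState` and `gamma_law_eos`, the mode strings `"rT"` and `"re"`, and the default mean molecular weight are all hypothetical and are not the Microphysics API. The physics is a simple ideal gas with constant adiabatic index γ, the kind of barebones EOS a simulation code might bundle.

```python
from dataclasses import dataclass

K_B = 1.380649e-16       # Boltzmann constant [erg/K]
M_U = 1.66053906660e-24  # atomic mass unit [g]


@dataclass
class EosState:
    """Hypothetical thermodynamic state shared by every calling code."""
    rho: float          # density [g/cm^3]
    T: float = 0.0      # temperature [K]
    e: float = 0.0      # specific internal energy [erg/g]
    p: float = 0.0      # pressure [erg/cm^3]
    mu: float = 0.6     # mean molecular weight (hypothetical default)


def gamma_law_eos(mode, state, gamma=5.0 / 3.0):
    """Complete `state` from (rho, T) if mode == "rT", or from (rho, e) if mode == "re"."""
    if mode == "rT":
        state.p = state.rho * K_B * state.T / (state.mu * M_U)  # ideal gas pressure
        state.e = state.p / ((gamma - 1.0) * state.rho)         # gamma-law energy
    elif mode == "re":
        state.p = (gamma - 1.0) * state.rho * state.e
        state.T = state.p * state.mu * M_U / (state.rho * K_B)
    else:
        raise ValueError(f"unknown EOS input mode: {mode}")
    return state


if __name__ == "__main__":
    # Two hypothetical callers use the same routine and the same state layout.
    state = gamma_law_eos("rT", EosState(rho=1.0e6, T=1.0e8))
    roundtrip = gamma_law_eos("re", EosState(rho=state.rho, e=state.e))
    print(state.p, roundtrip.T)  # roundtrip.T recovers 1e8 up to rounding
```

Because callers only see the state object and the input mode, a more complete EOS could be swapped in without changing the calling code. That is the design idea this sketch is meant to convey.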

Features


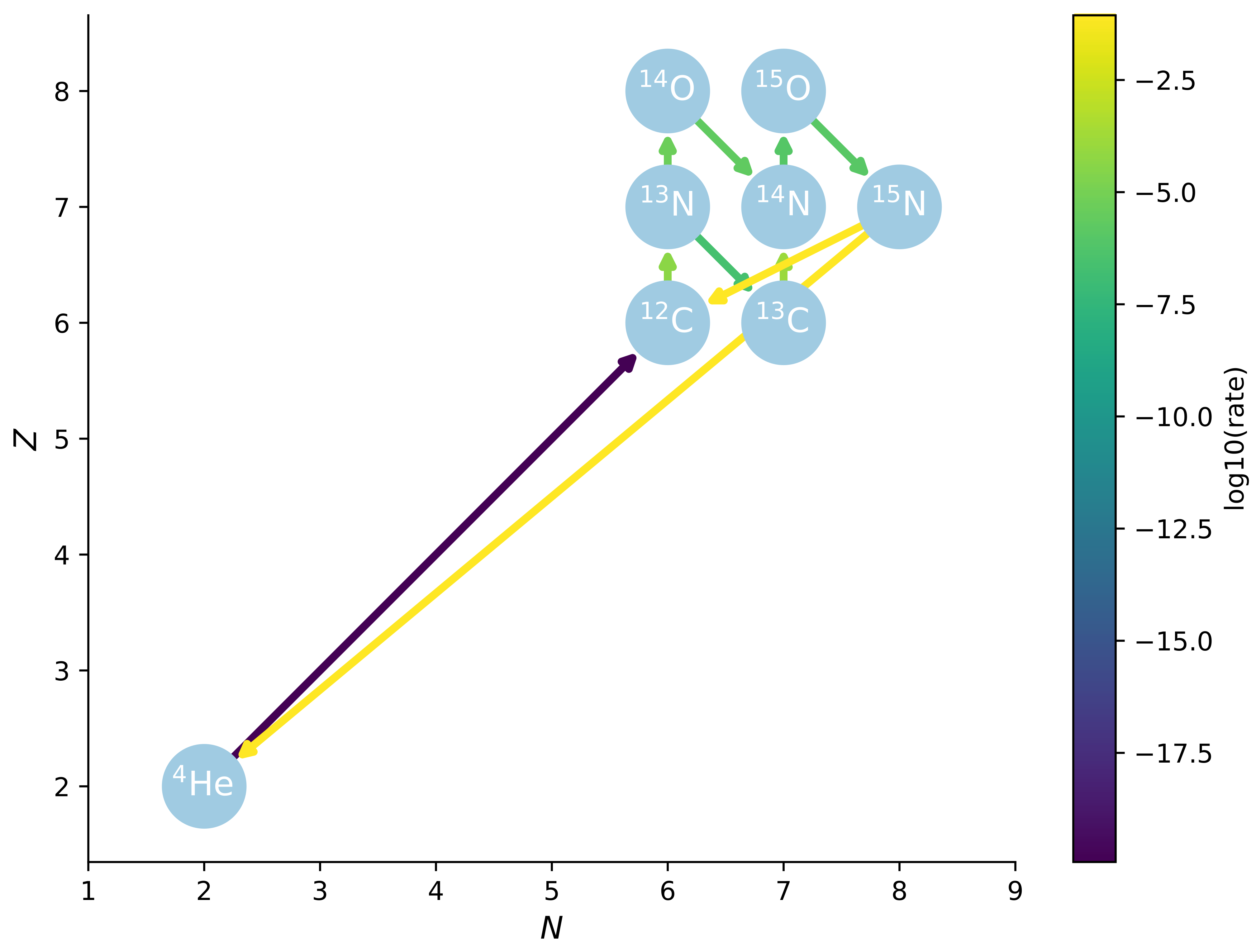

StarKiller Microphysics supports arbitrary reaction network construction using pynucastro, an open-source Python interface to the JINA Reaclib nuclear rate database. The image above shows a CNO network for hydrogen burning, constructed and visualized using pynucastro. Ongoing work includes optimization of large networks, wider support for tabulated weak rates, and additional nuclear physics.
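To show what constructing a reaction network involves, here is a minimal hand-written Python sketch of the CNO-I cycle. It does not use pynucastro or its API. The reaction list, the mass-number table, and the functions `nuclei`, `net_change`, and `rhs` are hypothetical illustrations. The sketch represents a network as a list of reactions and checks that one pass around the cycle converts four protons into one helium-4 nucleus. It also builds the abundance equations dY/dt from the reaction list, using placeholder rate coefficients rather than Reaclib values.

```python
from collections import Counter

# Hand-written CNO-I cycle as (reactants, products). Photons, positrons and
# neutrinos are omitted, so only nuclei are tracked.
CNO_I = [
    (("c12", "p"), ("n13",)),
    (("n13",), ("c13",)),          # beta+ decay
    (("c13", "p"), ("n14",)),
    (("n14", "p"), ("o15",)),
    (("o15",), ("n15",)),          # beta+ decay
    (("n15", "p"), ("c12", "he4")),
]

# Mass numbers, used here only to check baryon conservation.
MASS_NUMBER = {"p": 1, "he4": 4, "c12": 12, "c13": 13,
               "n13": 13, "n14": 14, "n15": 15, "o15": 15}


def nuclei(network):
    """Collect every nucleus that appears in the network, sorted by name."""
    names = set()
    for reactants, products in network:
        names.update(reactants)
        names.update(products)
    return sorted(names)


def net_change(network):
    """Net change in nucleus counts if every reaction fires once."""
    change = Counter()
    for reactants, products in network:
        change.subtract(reactants)
        change.update(products)
    return {name: n for name, n in change.items() if n != 0}


def rhs(network, rates, Y, rho):
    """dY/dt for molar abundances Y, with each rate r = lam * rho**(n-1) * prod(Y).

    `rates` holds one placeholder coefficient lam per reaction. No reaction here
    has two identical reactants, so no identical-particle factor is applied.
    """
    dYdt = {name: 0.0 for name in Y}
    for (reactants, products), lam in zip(network, rates):
        r = lam * rho ** (len(reactants) - 1)
        for name in reactants:
            r *= Y[name]
        for name in reactants:
            dYdt[name] -= r
        for name in products:
            dYdt[name] += r
    return dYdt


if __name__ == "__main__":
    print(net_change(CNO_I))  # {'p': -4, 'he4': 1}
```

The carbon, nitrogen and oxygen nuclei cancel in the net change, which is the sense in which they act as catalysts. In a real network the coefficients would come from a rate library and depend on temperature.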

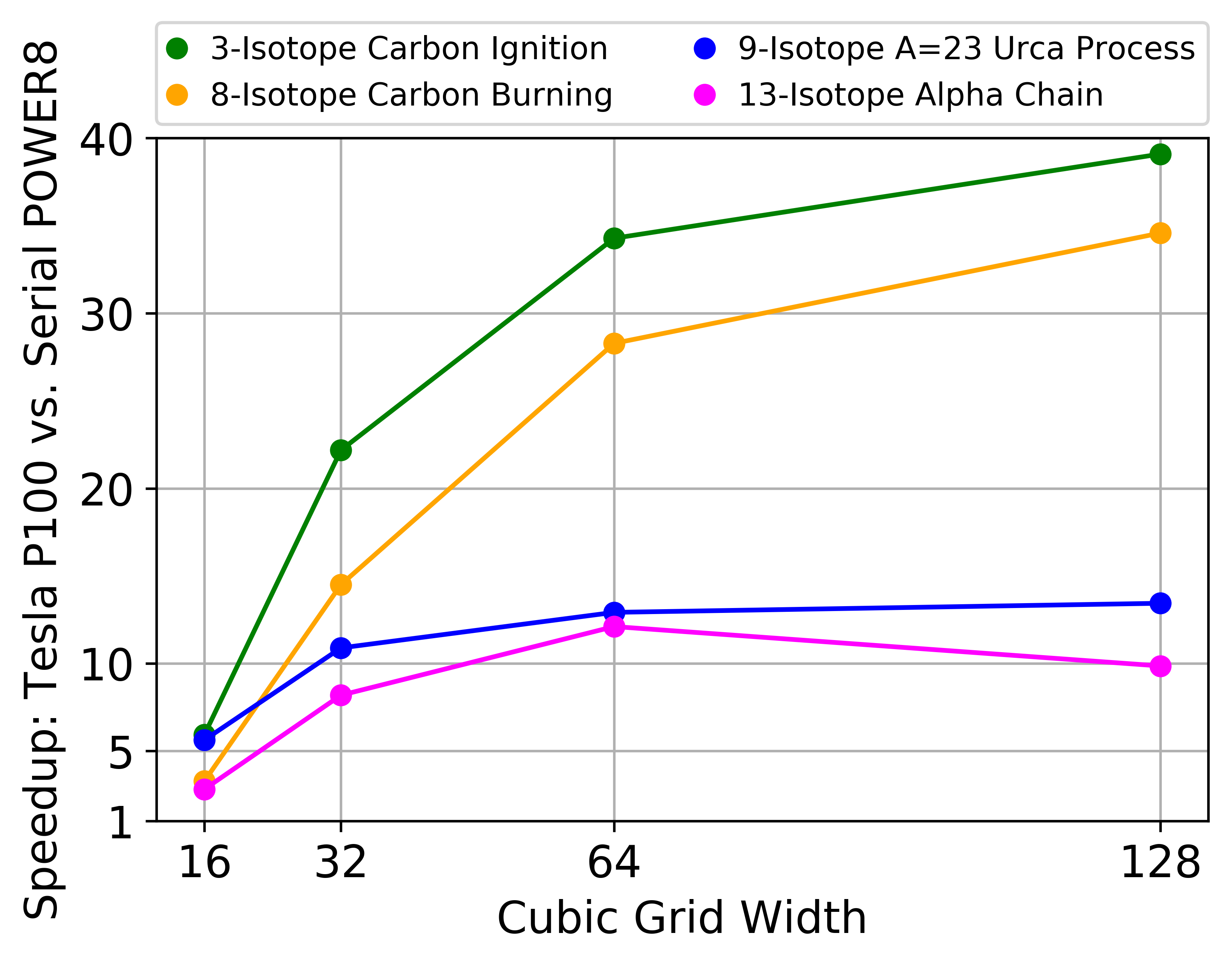

StarKiller Microphysics also supports GPUs, using CUDA Fortran for EOS calls and for ODE integration of the nuclear reaction networks. The image above shows a sample speedup obtained for implicit ODE integration with various reaction network sizes on the NVIDIA Tesla P100 architecture, using our CUDA Fortran port of VODE. Ongoing work aims to reduce the working-memory footprint of the integrator and network modules so that larger networks can run efficiently on GPUs.
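Reaction networks are stiff, which is why they are integrated implicitly. Here is a minimal sketch in Python, not VODE (which uses variable-order BDF methods for stiff systems). It applies first-order backward Euler with a Newton iteration to a hypothetical one-species burning law dy/dt = -k y², independently in each zone. Each zone's solve touches only its own data. That independence is what lets many zones be integrated in parallel on a GPU. The function names and parameters are illustrative.

```python
def backward_euler_step(y_old, k, dt, tol=1e-12, max_iter=50):
    """One implicit step for dy/dt = -k*y**2.

    Solves g(y) = y - y_old + dt*k*y**2 = 0 with Newton's method, starting
    from y_old.
    """
    h = dt * k
    y = y_old
    for _ in range(max_iter):
        g = y - y_old + h * y * y
        dg = 1.0 + 2.0 * h * y          # Jacobian of g (a 1x1 matrix here)
        dy = -g / dg
        y += dy
        if abs(dy) <= tol * abs(y):
            return y
    raise RuntimeError("Newton iteration did not converge")


def integrate_zones(y0_per_zone, k, dt, nsteps):
    """Advance every zone independently; on a GPU each zone could map to a thread."""
    result = []
    for y in y0_per_zone:
        for _ in range(nsteps):
            y = backward_euler_step(y, k, dt)
        result.append(y)
    return result


if __name__ == "__main__":
    # Stiff case with k*dt = 1e5: explicit Euler would give 1 - 1e5 < 0 after
    # one step, while backward Euler stays positive and decreasing.
    print(integrate_zones([1.0, 0.5], k=1.0e6, dt=0.1, nsteps=1))
```

In a real network, y becomes a vector of abundances plus temperature, and the Newton step solves a linear system with the full Jacobian. Holding that Jacobian for each zone is one source of the per-zone memory footprint that matters on GPUs.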
